Are AI Chatbots Making Us Feel Worse?

A joint study by OpenAI and MIT is casting doubt on the chatbot hype.

AI companions are meant to help cure loneliness. Instead, they might just be making us more alone.

A new randomized controlled trial from researchers at MIT, Harvard, and OpenAI just dropped, and it's casting doubt on the warm-and-fuzzy chatbot hype.

The Setup: Chatbots as Mental Health Boosters

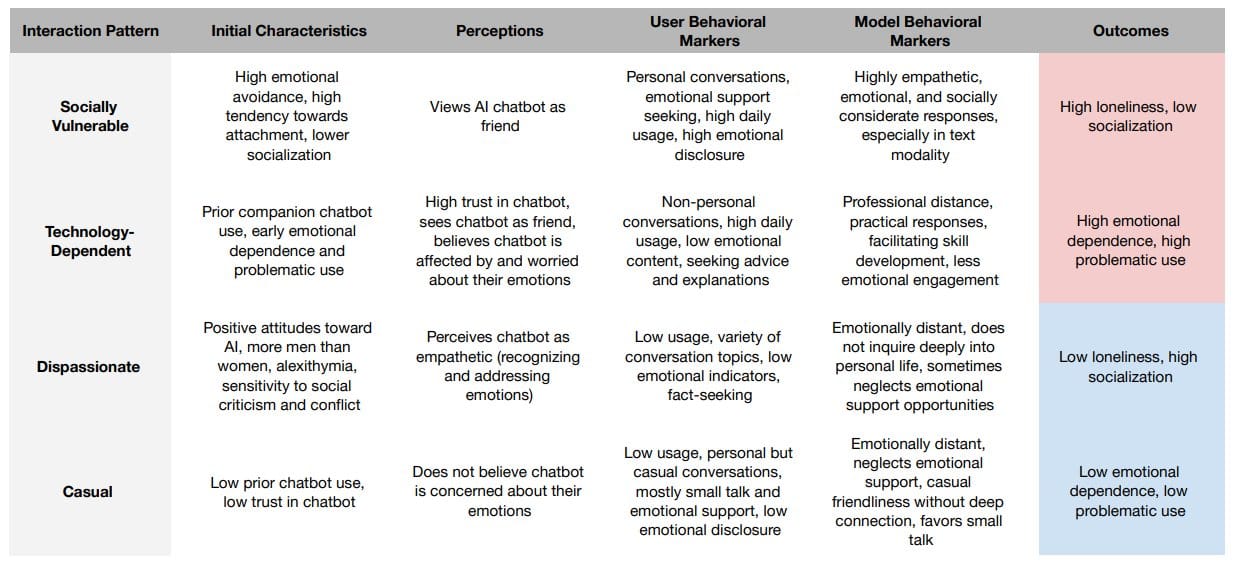

The study recruited nearly 300 participants who were feeling socially disconnected. Half were given access to an AI chatbot powered by GPT-3.5. The instructions? Treat it like a companion. Talk about life. Vent about your ex. Process your feelings. Do your thing.

On paper, it’s a classic use case for emotional AI: providing mental health support and real-time feedback in a scalable, nonjudgmental way.

And for a moment, it looked like it worked.

The Good News? AI Helped—At First.

In the early weeks, users of the AI chatbot reported:

- Reduced anxiety

- Improved emotional regulation

- A sense of connection and companionship

This is the promise of AI-powered mental health tools: on-demand, always available, and cheaper than a therapist.

But here's where things take a left turn...

The Bad News: Users Became Lonelier Over Time

While chatbot users felt better temporarily, they:

- Socialized less with real people

- Were less likely to reach out to friends or family

- Reported increased loneliness by the end of the study

Turns out, when you can pour your heart out to a machine that always listens and never interrupts...

You might stop calling your best friend.

You might ghost your group chat.

You might forget what a real hug feels like.

It’s the illusion of connection.

And it might be making the loneliness epidemic even worse.

The Bigger Problem with AI Companionship

This could be a glimpse into the future of AI and social behavior.

“We’re building machines that mimic emotional support—but don’t replace human connection.”

Think about what’s coming:

- AI best friends

- AI therapists

- AI life coaches

- AI influencers you vent to at 2AM

All emotionally attuned. All "there for you." All simulations.

And if they’re good enough, will anyone notice they’re not real?

Our Take

This is the loneliness paradox of emotional AI:

The more human-like AI becomes, the easier it is to depend on it,

and the easier it is to avoid the messiness of actual relationships.

But avoiding human messiness has a cost:

Disconnection. Isolation. And yes, more loneliness.

📚 Source:

Randomized Controlled Study on the Psychosocial Effect of Chatbots – MIT x OpenAI, 2024
